Artificial Intelligence runs all through the iPhone

Apple didn’t get to be the world’s biggest technology brand, worth over $1 trillion USD, by accident. And you simply cannot be a technology company anywhere in the world, let alone the largest one, anchored in Cupertino, California (Silicon Valley), without knowing a lot about Artificial Intelligence.

This year’s list of Apple’s devices, the 2018 iPhone XS, iPhone XS Max and iPhone XR, all have dedicated microprocessors designed to perform AI-related tasks, and a suite of software which delivers enhanced iPhone experiences through Machine Learning and Neural Processing algorithms.

When you see a list of songs you like on the front page of the iTunes store, when your iPhone speaks to you as Siri and reels off a joke in response to something you’ve asked it or, as you’ll see below, when your camera tracks the score in a basketball game, then you know your phone’s running AI.

The dedicated AI hardware in this year’s iPhone range

Here’s what you need to know about the ways Apple are using AI innovations to make their users love their iPhones just a little bit more.

A ‘Neural Engine’ built in to the main processor: A Central Processing Unit (CPU), as you probably know, is the ‘brain’ of your phone. It does all the calculations to make pixels light up and create an image on your phone’s screen, as well as executing most of the other actions you require of the device.

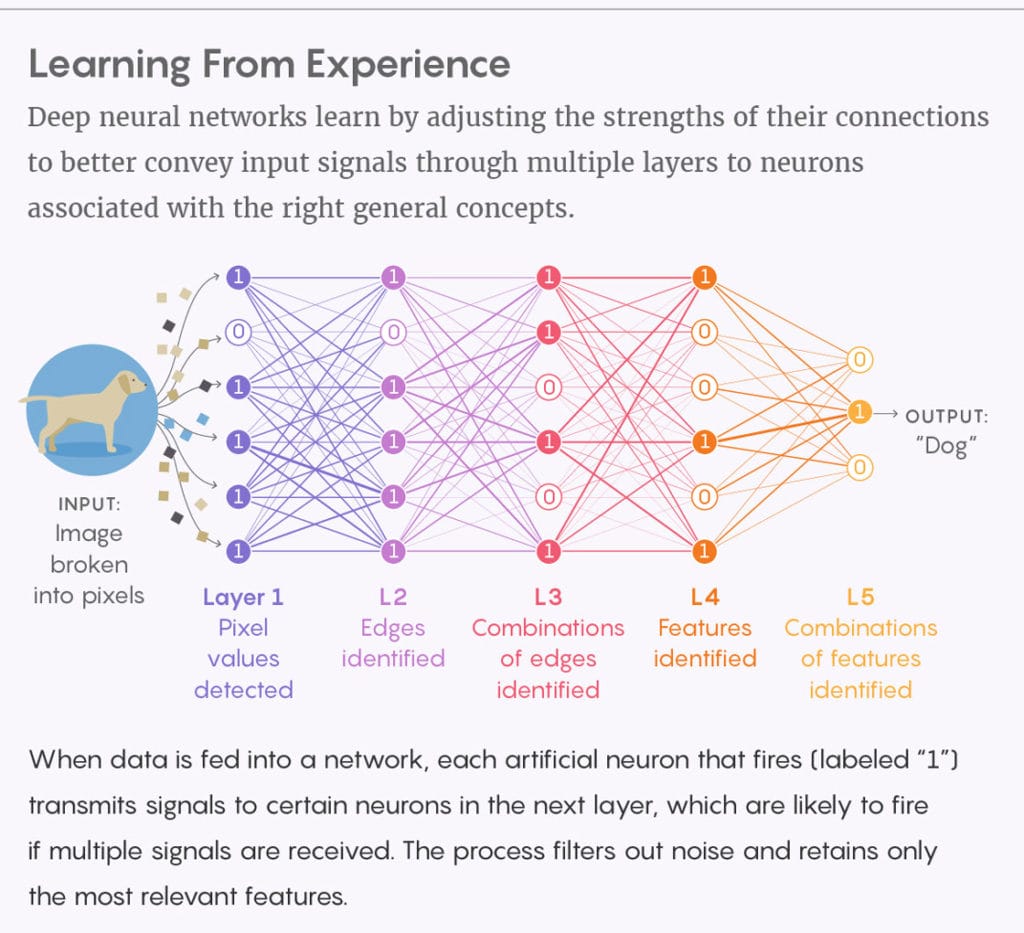

Image Source: Quantum magazine

Apple’s Neural Engine is a component of their in-house designed A12 Bionic processor. This isn’t the first time the ‘Bionic’ Neural capability has been around in an iPhone. It was introduced last year, in the A11 Bionic chip used in the iPhone 8 range and the iPhone X.

The Neural Engine is used for any tasks involving Machine Learning (ML) including Facial Recognition – now used to grant access to the X series, in place of the fingerprint checker.

This year’s Neural Engine can run through an incredible 5 trillion operations per second.

Having a dedicated microprocessor specifically optimized for ML tasks makes the phone more efficient. In fact, the new iPhone also has a separate GPU (Graphics Processing Unit), which helps efficiency too. Splitting the processors out like this allows Apple to extend the battery life of this year’s phones by between 30 minutes and 1 hour, despite adding features galore.

AI will deliver even better images from your iPhone’s camera

This year’s iPhone doesn’t take pictures in the classical sense. When the user presses the shutter, the phone ‘decides’ what it is looking at, for example, differentiating between a face and a scenery shot, and gathers data, through the lens, which is then worked on by Apple’s Neural Engine, to generate the best image possible.

On the XS / XS Max models, this new approach allows users to adjust depth of field after the image has been captured, for example.

Siri Suggestions

Siri Suggestions are unprompted recommendations from Siri which can improve your life. The capabilities have been around in iPhones since iOS 9, but this year sees them getting some extra grunt. They are, strictly speaking, a feature of iOS 12, Apple’s operating system for handheld devices, rather than a new capability in this year’s iPhone. However, to most people, the distinction means little.

One example of a Siri Suggestion might be an unprompted reminder from Siri that it’s someone’s birthday. Over time, your interactions with Siri will teach it what you like and what you don’t. For example, if you always swipe away those reminders, Siri will take the hint and stop offering them up.
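To make the "take the hint" idea concrete, here is a minimal Python sketch of a filter that stops offering a kind of suggestion once the user has dismissed it often enough. It is a toy model only, not how Siri works internally: the class name, the suggestion labels and both thresholds are assumptions invented for illustration.

```python
from collections import defaultdict


class SuggestionFilter:
    """Toy model: stop offering a suggestion type the user keeps dismissing."""

    def __init__(self, min_feedback=3, max_dismiss_rate=0.8):
        # Both thresholds are made-up values for illustration.
        self.min_feedback = min_feedback
        self.max_dismiss_rate = max_dismiss_rate
        self.shown = defaultdict(int)
        self.dismissed = defaultdict(int)

    def record(self, kind, dismissed):
        """Log one suggestion that was shown and whether it was swiped away."""
        self.shown[kind] += 1
        if dismissed:
            self.dismissed[kind] += 1

    def should_offer(self, kind):
        """Keep offering until there is enough feedback to say it is unwanted."""
        shown = self.shown[kind]
        if shown < self.min_feedback:
            return True
        return self.dismissed[kind] / shown < self.max_dismiss_rate


suggestions = SuggestionFilter()
for _ in range(4):
    suggestions.record("birthday_reminder", dismissed=True)
suggestions.record("call_back", dismissed=False)

print(suggestions.should_offer("birthday_reminder"))  # False
print(suggestions.should_offer("call_back"))          # True
```

Waiting for a minimum amount of feedback before hiding anything means one accidental swipe does not switch a suggestion off for good.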

This feature has now been opened up to third parties, who can ‘donate’ an action (like placing an order) to Siri, which subsequently reminds the user at times when it might be relevant and executes the task if required.

3rd party developers can now use the iPhone’s AI smarts

For the first time this year, Apple have even opened their AI chips’ capabilities to their third-party developer community, something they typically do when they want to accentuate the importance of new features built in to their devices. Developers can use Apple’s own Core ML framework to run their machine learning models efficiently on the chip.

Bringing it all together

Apple aren’t alone in betting big on Artificial Intelligence in their phones. In fact, many of the image processing tricks they’ve employed this year were included by Google in their Pixel phone. Apple’s inclusion of AI at the heart of both its software and hardware roadmaps seems to come from a desire to offer the most automated, personalized experience possible on their phones.

Ultimately, Apple’s goal is to extend what it showed with its basketball monitoring app. Point the camera at a live basketball game and this year’s iPhone will count the goals as well as the misses and keep track of the game for you. It’s a ‘simple’ (if still incredible) example of a capability with an extremely limited domain – specifically, the sport of basketball. Nevertheless, it shows what may be possible in future iPhones. With no effort on the part of the user, this year’s iPhone can recognize what’s happening (a game of basketball) and do something useful for the user (keep score) without being asked to.

Imagine if your phone could do that on your commute to work, ordering a coffee for you because it realized you did that at the same place and time most days, or texting your friend because it can tell you’re late for an appointment with them. Apple’s new AI capabilities in this year’s phones, its openness to sharing the facility with third-party developers and its own investments in AI-based software suggest that these sorts of services, and many more we cannot yet dream of, will soon be here – along with 5G, in the 2019 iPhones.
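As a rough illustration of the coffee example, the Python sketch below looks at a log of past actions and reports the one the user performs at a given place and hour on most days. Everything in it is hypothetical: the function name, the log format and the thresholds are assumptions, and a real system would learn from far richer signals.

```python
from collections import Counter


def habitual_action(history, place, hour, min_days=5, min_share=0.6):
    """Return the action usually taken at this place and hour, or None.

    history is a list of (day, place, hour, action) tuples. The format and
    both thresholds are invented for this sketch.
    """
    days_seen = {day for day, _, _, _ in history}
    if len(days_seen) < min_days:
        return None  # not enough history to call anything a habit

    # Count on how many distinct days each action happened in this context.
    context_days = {(d, a) for d, p, h, a in history if p == place and h == hour}
    days_per_action = Counter(action for _, action in context_days)
    if not days_per_action:
        return None

    action, days = days_per_action.most_common(1)[0]
    return action if days / len(days_seen) >= min_share else None


history = [
    ("Mon", "cafe", 8, "order_flat_white"),
    ("Tue", "cafe", 8, "order_flat_white"),
    ("Wed", "cafe", 8, "order_flat_white"),
    ("Thu", "office", 8, "open_email"),
    ("Fri", "cafe", 8, "order_flat_white"),
]

print(habitual_action(history, "cafe", 8))    # order_flat_white
print(habitual_action(history, "office", 8))  # None
```

Counting distinct days rather than raw events keeps one busy morning from looking like a routine.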
